Grade components, not browsers

April 2020 note: Hi! Just a quick note to say that this post is pretty old, and might contain outdated advice or links. We're keeping it online, but recommend that you check newer posts to see if there's a better approach.

Throughout the short history of the web, declarations of browser support have gone through a number of popular phases. Early approaches were often defined by exclusion, à la “best viewed in Netscape 4.” Thankfully, more inclusive ways to define browser support (like Yahoo’s Graded Browser Support, detailed below) helped move the web beyond a focus on individual browsers towards a broader cross-browser system. However, given how much has changed in browsers and devices in recent years, do the ways we talk about support today still accurately reflect the ways in which we build for the cross-device web?

Graded Browser Support

In 2006, Yahoo released their revolutionary Graded Browser Support (GBS) documentation, perhaps the first declaration of browser support that embraced the diversifying web. In the rationale for GBS, Yahoo argued for a support system based on the practice of Progressive Enhancement:

Support does not mean that everybody gets the same thing. Expecting two users using different browser software to have an identical experience fails to embrace or acknowledge the heterogeneous essence of the Web.

…and then went on to back that methodology with tiered support categories:

…we define grades of support. There are three grades: A-grade, C-grade, and X-grade support.

Under GBS, these browser grades represent broad definitions of browser capabilities:

- “C-grade is the base level of support, providing core content and functionality. It is sometimes called core support.”

- “A-grade support is the highest support level. By taking full advantage of the powerful capabilities of modern web standards, the A-grade experience provides advanced functionality and visual fidelity.”

- “X-grade provides support for unknown, fringe or rare browsers as well as browsers on which development has ceased. Browsers receiving X-grade support are assumed to be capable.”

As an aside, one might argue that there are really only two categories here, with “X” representing uncategorized browsers that are assumed to be “A”. But, moving on! Yahoo’s system beautifully codified the way many of us were beginning to think about support at the time, and it continues to shape our notion of browser support today, for better and worse.

The Red Pen

Despite broad adoption of the system, implementing GBS raised questions about how to enforce these somewhat subjective grades. For example, how should we ensure that a B-Grade browser is not delivered A-Grade features? Or, if a B-Grade browser can handle several A-Grade features, should we hold it back from receiving them?

While proponents of feature-detection surely have plenty of good answers to these questions, some lesser-discussed portions of GBS display Yahoo’s take on the matter:

- “C-grade browsers should be identified on a blacklist.”

- “A-grade browsers should be identified on a whitelist.”

Wait… whitelists and blacklists? A progressive-enhancement-based grading system that recommends pre-categorizing browsers with device detection? That can’t be right…

Call that progressive?

While there are cases where device detection is needed as a fallback for properly detecting certain features today, it isn’t the most sustainable or future-friendly way to deliver broad experience divisions. Besides, graded experiences are exactly what Progressive Enhancement and feature detection are meant to provide on their own. As such, the browser-targeting portions of GBS have long felt a little out of touch with a field striving for more self-sustainable means of serving its users.

More importantly, though, assigning A and B grades to the right browsers seems a relatively small problem compared to the question of whether broad browser categorization is sustainable in the first place. After all, broadly categorizing browsers based on their vintage or device’s form factor assumes a heck of a lot about the shared aspects of browsers within a category, and it risks ignoring how much is truly shared across browsers in separate categories as well. Imagine serving features based on the assumption that “mobile,” “tablet,” and “desktop” browsers have predictable and exclusive feature sets… ahem.

Look Ma, all A’s!

Perhaps the greatest problem with graded browser support is that it lacks useful granularity. More and more, the browsers that we’re encountering most frequently are A-Grade, and there’s an entire alphabet of gradable features within that group alone. Here at Filament, we’ve long utilized a “Cut the Mustard” diagnostic test to make a clean break between the B and the A browsers, but that’s often just the first step in the workflow of delivering features appropriately.
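
As a rough illustration, a “Cut the Mustard” test is usually one feature-detection expression evaluated before any enhancements load. The sketch below is modeled on the widely circulated BBC-style check. It is not Filament’s actual test: the probed features (`querySelector`, `addEventListener`, `localStorage`) and the name `cutsTheMustard` are assumptions. So that it runs outside a browser, the document and window objects are passed in rather than read from globals.

```typescript
// Minimal shape of the globals being probed. In a browser you would call
// cutsTheMustard({ doc: document, win: window }). Hypothetical helper.
interface Environment {
  doc: object;
  win: object;
}

// Feature detection only: no user-agent strings are inspected, so a browser
// passes or fails based purely on what it can actually do.
function cutsTheMustard(env: Environment): boolean {
  return (
    "querySelector" in env.doc &&
    "addEventListener" in env.win &&
    "localStorage" in env.win
  );
}
```

A `true` result would typically gate the enhanced layer, for example by adding an `enhanced` class to the root element or by loading the enhanced script. Everything else keeps the baseline experience.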

Given the speed at which technologies are now being implemented in browsers, it’s unhelpful to compare them in these broadly defined categories that represent “good” and “bad”; it’s more complicated than that. Increasingly, our support landscape looks far more like a scatterplot than a scale, and our definition of support needs to evolve to match that new reality.

A Modernized Definition of Support

At Filament Group these days, we try to build our sites out of modular, independent components, and we document them for our clients that way as well.

In taking a component-driven approach to building websites, we’ve found that the browser features required to offer an A-Grade experience of a particular component become much easier to document. For example, a data visualization component might require HTML5 Canvas to be enhanced to its best experience, but if Canvas isn’t supported, that component will work just fine as a simple HTML table.
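
The chart example can be written as a decision that belongs to the component itself: probe for Canvas, and keep the table otherwise. The sketch below uses the common detection idiom of asking a freshly created canvas element for a 2D context. The names `chartGrade` and `CanvasLike` are hypothetical, and the element is passed in so that the logic runs outside a browser.

```typescript
// The subset of a <canvas> element this check needs. In a browser, pass
// document.createElement("canvas").
interface CanvasLike {
  getContext?: (contextId: "2d") => unknown;
}

type ChartGrade = "A" | "B";

// A-Grade: draw the visualization on a canvas.
// B-Grade: leave the HTML table markup exactly as delivered.
function chartGrade(probe: CanvasLike): ChartGrade {
  // Browsers without Canvas lack getContext entirely, or return null.
  const ctx = probe.getContext?.("2d");
  return ctx ? "A" : "B";
}
```

Because the table is the baseline markup, a B-Grade result needs no extra work. The enhancement only ever adds to what is already there.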

Further, we’ve noticed that a particular device may support a mix of A- and B-Grade features based on its unique capabilities. We need a better way to declare that this mix of grades is both expected and normal.

Grade components, not browsers

To properly define and set expectations for how our sites should work across browsers and devices both now and in the future, we’ve made a simple change to the way we define browser support: We no longer grade browsers – instead, we grade components.

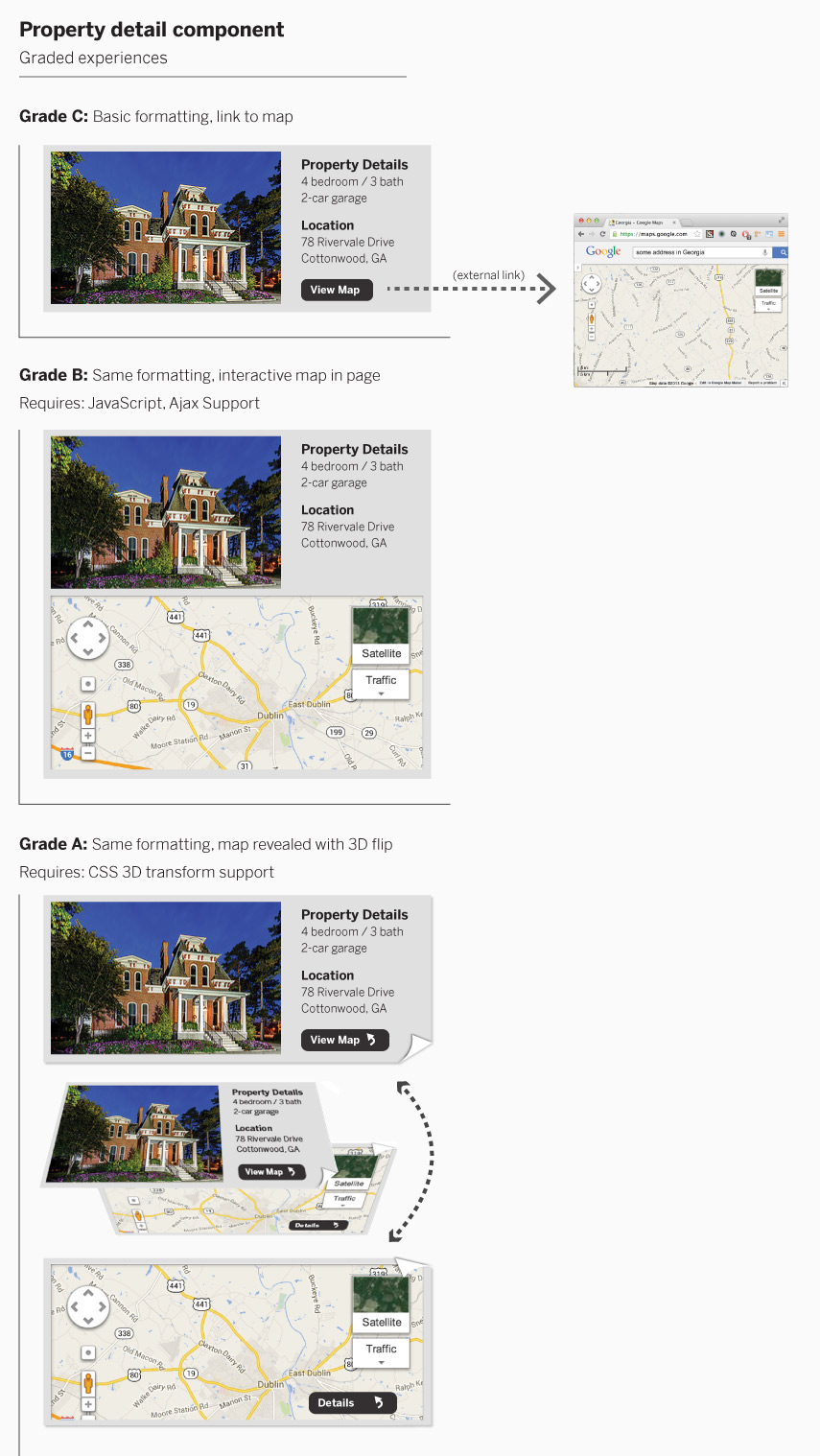

Nearly every component we build has a basic and an enhanced experience, though many have one or more middle levels of enhancement as well. The level of enhancement that each component receives is simply based on the features that are supported by a browser (and when necessary we qualify those enhancements with feature detection). Here’s an example of a fictitious property detail component:
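
One way to write such a grade declaration down is sketched below. Each component lists its levels from most to least enhanced, along with the features each level requires, and the browser receives the highest level it satisfies. This structure is our illustration, not a documented Filament format. The level contents for `propertyDetail` are invented, and feature names are plain strings that would each map to a real feature test.

```typescript
type Feature = string;

interface GradeLevel {
  grade: string;       // e.g. "A", "B", "C"
  requires: Feature[]; // every listed feature must be supported
}

interface ComponentSpec {
  name: string;
  // Ordered from most to least enhanced; the last level should require
  // nothing, so every browser gets at least the baseline.
  levels: GradeLevel[];
}

// Pick the most enhanced level whose requirements this browser meets.
function resolveGrade(spec: ComponentSpec, supported: ReadonlySet<Feature>): string {
  for (const level of spec.levels) {
    if (level.requires.every((f) => supported.has(f))) {
      return level.grade;
    }
  }
  throw new Error(`${spec.name} declares no baseline level`);
}

// Hypothetical levels for the fictitious property detail component.
const propertyDetail: ComponentSpec = {
  name: "property-detail",
  levels: [
    { grade: "A", requires: ["canvas", "history.pushState"] },
    { grade: "B", requires: ["querySelector"] },
    { grade: "C", requires: [] },
  ],
};
```

Because each component resolves its grade independently, one browser can receive an A for one component and a B for another. That is the normal mix of grades described above.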

Keep in mind that these grades can be based on behavior as well, and not just visual presentation. For example, the navigation component of an application might offer a B-Grade experience that simply links between separate pages, while its A-Grade experience might fetch an external page with Ajax and update the browser’s URL to reflect the new page being shown (requiring several features like history.pushState to do so).
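
A behavioral grade like the navigation example can be checked in the same way. The sketch below is a minimal, hypothetical version. It assumes `fetch` as the Ajax mechanism (`XMLHttpRequest` would serve equally well) and reads the relevant window properties from an object that is passed in, so that it runs outside a browser.

```typescript
// Minimal view of the window properties the navigation check reads.
interface NavEnvironment {
  history?: { pushState?: unknown };
  fetch?: unknown;
}

type NavGrade = "A" | "B";

// B-Grade: plain links that load separate pages; no script required.
// A-Grade: intercept clicks, fetch the page, swap in its content, and
// update the URL with history.pushState.
function navigationGrade(win: NavEnvironment): NavGrade {
  const canPush = typeof win.history?.pushState === "function";
  const canFetch = typeof win.fetch === "function";
  return canPush && canFetch ? "A" : "B";
}
```

A script would attach its click handlers only when the result is "A". In every other browser the links keep working as ordinary links.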

Now, we should note that we’ve chosen a lettering system because, well, it’s a nice shorthand and is already familiar. Alternatively, a numbering system might actually make more sense, with 1 representing the simplest version of a component and any number of versions progressing from there. It’s also worth keeping in mind that there may be times when upgrades aren’t linear, though we find that they often can be described that way. The important thing is that enhancement isn’t always a binary matter across an entire application; it’s more granular.
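
When a component’s upgrades aren’t linear, a single grade or number stops fitting. One alternative, which is our assumption rather than something the approach above prescribes, is to treat each upgrade as an independent layer over the baseline. The gallery component and its feature names below are hypothetical.

```typescript
interface Enhancement {
  id: string;         // hypothetical enhancement name
  requires: string[]; // features that must all be supported
}

// The baseline (level 1) always renders. Each enhancement on top of it is
// applied independently, so two browsers can end up with different mixes
// rather than at different points on a single scale.
function applicableEnhancements(
  enhancements: readonly Enhancement[],
  supported: ReadonlySet<string>,
): string[] {
  return enhancements
    .filter((e) => e.requires.every((f) => supported.has(f)))
    .map((e) => e.id);
}

// Hypothetical photo gallery component with two unrelated upgrades.
const galleryEnhancements: Enhancement[] = [
  { id: "pinch-zoom", requires: ["touch-events", "css-transforms"] },
  { id: "lazy-images", requires: ["intersection-observer"] },
];
```

With this model, a touch device without `IntersectionObserver` and a desktop browser that has it each receive a different upgrade. Neither is “higher” than the other.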

With graded components, we make experience divisions at the component level rather than enhancing or degrading a browser’s entire experience. In the end, grading components rather than browsers allows us to document and follow through with the goal of delivering the most appropriate experience to each and every browser and device.